Biden White House Pressured Meta To Moderate Texts On WhatsApp

Government attempts to censor public comments on social media have been in the news recently. But a little-known effort was also made to affect text messaging about Covid vaccines.

Much has been made of the revelations in the Twitter Files about pressure on Twitter, along with other social media platforms, to suppress certain posts and users according to the government’s wishes. Yet there is a piece to this story that in some regards is far more alarming: the White House’s interest in content moderation on a private speech platform.

I gained access to emails between White House staffers and Meta executives about WhatsApp that underlie this investigative report. The emails were obtained through discovery in Missouri v. Biden, a First Amendment case brought by two attorneys general and by the New Civil Liberties Alliance (a nonprofit public interest law firm) on behalf of private plaintiffs.

As early as January 26, 2021, almost immediately after Biden took office, communications between the White House and Meta were underway regarding content moderation. Of specific concern were vaccine hesitancy and how Meta would combat it across its multiple platforms, including Facebook and Instagram. But amid the copious correspondence that I reviewed about those platforms, something jumped out at me: repeated queries about another Meta property, WhatsApp, a service designed for private messaging.

As at Twitter, the moderation campaigns at Facebook and Instagram were conducted because they were technically feasible and because the platforms are inherently social: posts are public, and Meta could use various methods to monitor and suppress them. But WhatsApp is fundamentally different. It is not designed for people to build an audience or to share content widely. Rather, it is used for direct, personal communications between family members, friends, a doctor and a patient, and so on. According to Meta, 90% of WhatsApp messages are from one person to another, and groups typically have fewer than 10 people.

Questioning Meta executives about what actions could be taken on a service that people use specifically for private communications is a striking departure from other efforts.

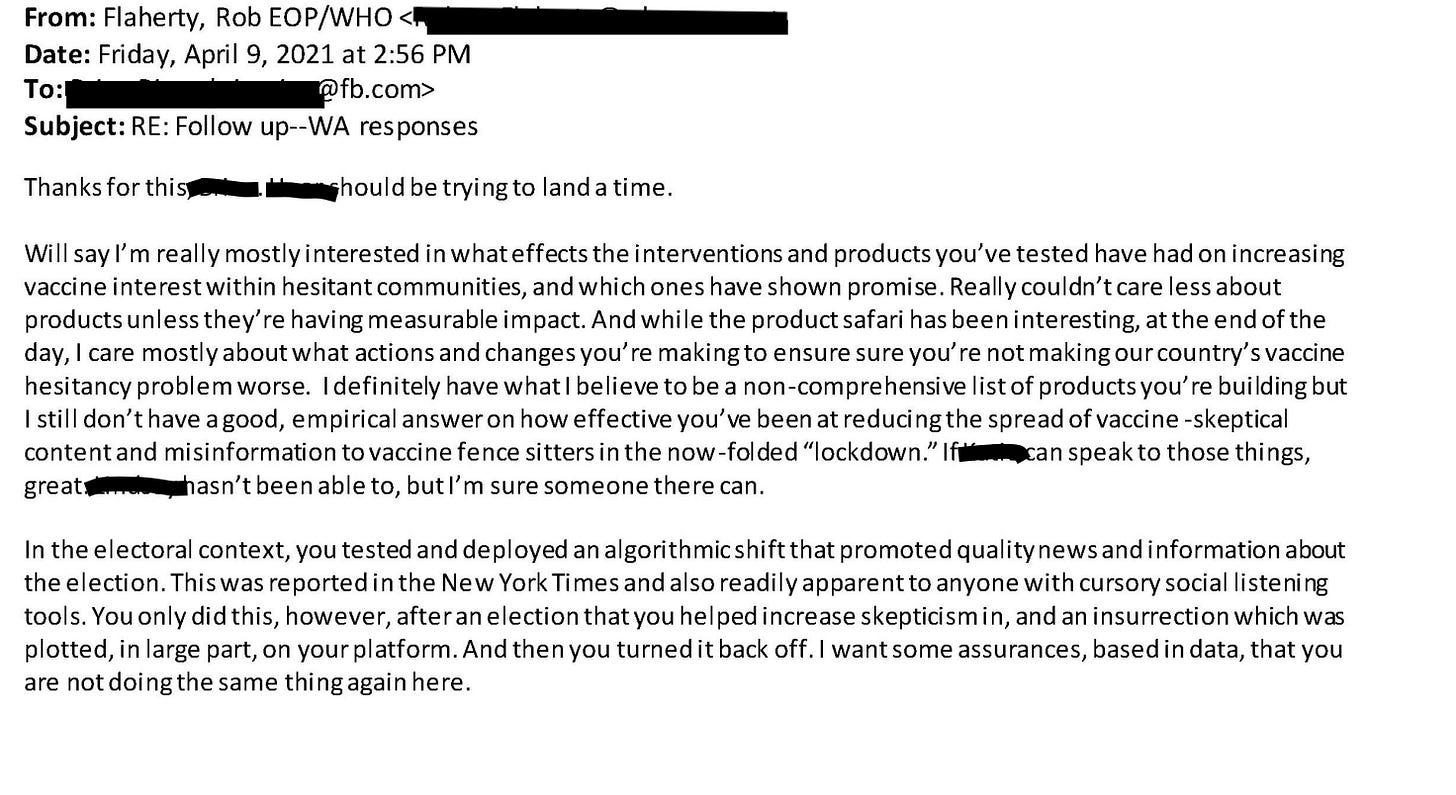

In multiple emails, as early as March 2021, Rob Flaherty, the Biden White House’s Director of Digital Strategy, pressed Meta executives to tell him what interventions the company had taken on WhatsApp.

Flaherty wanted to know what they were doing to reduce harm on the messaging app. Seemingly dissatisfied with earlier explanations, on March 22, 2021, he wrote, “If you can’t see the message, I’m genuinely curious—how do you know what kinds of messages you’ve cut down on?”

Referred to as “Andy” in the email, Andrew Slavitt, at the time the White House Senior Advisor on the Covid Response, was also on the email chain. Getting Meta to moderate its content was enough of a priority for Slavitt and Flaherty that Slavitt was willing to get on the phone with a Meta executive a “couple of times per week” if necessary.

Because of WhatsApp’s structure, targeted suppression or censorship of certain information did not appear possible. Much of the aim of content moderation on WhatsApp, therefore, was instead to “push” information to users. The service partnered with the World Health Organization, UNICEF, and more than 100 governments and health ministries to send Covid-19 updates and vaccine-related messages to users. The company also created initiatives such as a Spanish-language WhatsApp chatbot to help users make local vaccination appointments.

Flaherty appeared to understand the limitations of the platform but nevertheless continued to ask about it. In an exchange titled “additional context re: WhatsApp,” six days after Flaherty’s March 22 email, a Meta employee responded to Flaherty’s questions, explaining that WhatsApp is for private messaging, a key difference from the social media platforms Facebook and Instagram.

Flaherty replied that he was “very aware” and added a smiley face.

The suppression tools at their disposal—specifically, labeling and limiting message forwards—the Meta employee explained, were blunt “content-agnostic” interventions. The premise was that messages that didn’t originate from a close contact were more likely to contain misinformation. So by preventing virality in general, the company was automatically helping to prevent misinformation.

Flaherty then asked how they measured success. Was it from a reduction in forwarded messages? Was there a way to measure impact across company properties?

In a reply [blue text in image below] a Meta employee said reducing forwards was just one measure of lowering virality on WhatsApp. They also banned accounts that engaged in mass marketing and scams, including those related to Covid-19 misinformation. And they tracked engagement through proactive work such as WhatsApp’s connection with governments and nonprofits sending 3 billion Covid-19 messages through the service.

In one of the follow-up exchanges, Flaherty seemed dissatisfied with the response and again pressed Meta to take action on vaccine hesitancy. “I care mostly about what actions and changes you’re making to ensure you’re not making our country’s vaccine hesitancy problem worse,” he wrote. “I still don’t have a good, empirical answer on how effective you’ve been at reducing the spread of vaccine-skeptical content and misinformation to vaccine fence sitters.”

The email subject was “WA responses” (i.e. WhatsApp responses), and, as a point of comparison, Flaherty referenced Facebook’s algorithm shift during the election to promote “quality news,” and chided Facebook for providing the platform where, he said, the insurrection was, in large part, plotted. But the algorithm shift apparently was then switched off. Flaherty said he wanted assurances that this apparent backing away from content moderation wasn’t also happening on WhatsApp.

Flaherty wanted empirical data about the effectiveness of reducing “vaccine-skeptical content” on a platform composed of non-public messages. He wanted supposed misinformation on a private messaging app to be “under control.” What, exactly, was he hoping to get Meta to do?

It was obvious from the start that WhatsApp’s interface didn’t allow for the granular control Flaherty appeared to desire. And his smiley face response suggests he well understood this. Yet he kept badgering the Meta executives anyway.

The exchanges about WhatsApp are arresting not because of what Meta ultimately did or did not do on the platform, since the company’s options for intervention appear to be limited, but because moderating content on a private messaging service was a continuing interest for a White House official at all.

It goes without saying that our health authorities have often implemented very different policies from their counterparts in many other nations. A number of Western countries have far more reserved guidance on Covid vaccines and children, for example, than ours does. If a European health professional using WhatsApp expressed skepticism about vaccinating kids, perhaps simply echoing his country’s policy, would that have been considered misinformation? If it were up to the White House, apparently the answer would have been yes. Fortunately, targeted censorship on a private messaging app is still out of government reach.
